NVIDIA HGX H100 Server Options

Blackwell Cloud offers customizable servers built by various OEMs, each configurable with up to 8x NVIDIA HGX™ H100 GPUs.

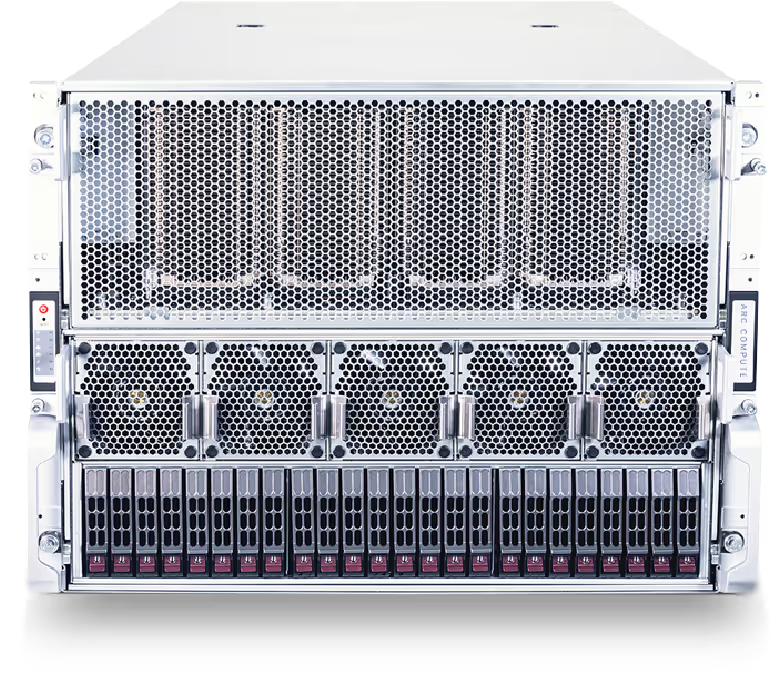

NVIDIA HGX H100 6U

PowerEdge XE9680